1. User Data Security and Privacy

When AI systems process personal information, companies must meet the following requirements for protecting that data:

End-to-End Encryption

Secure Storage (on-premises or cloud-based)

Compliance with laws such as GDPR

Meeting these requirements helps keep user data confidential and reduces the risk of a breach.

2. Keep Unauthorized Users Out

Developers use a variety of security measures to stop anyone from accessing their systems without permission. These include:

Role-based Access Control

Multi-Factor Authentication

API Security Protocols

These measures help ensure that only authorized users can access the AI system.
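As a rough illustration of how role-based access control and multi-factor authentication can work together, here is a minimal Python sketch. The role names, the action names, and the `is_allowed` helper are all hypothetical and chosen only for this example; a production system would normally rely on an identity provider rather than an in-memory table.

```python
from enum import Enum


class Role(Enum):
    VIEWER = "viewer"
    OPERATOR = "operator"
    ADMIN = "admin"


# Hypothetical permission map: the actions each role may perform.
ROLE_PERMISSIONS = {
    Role.VIEWER: {"read_output"},
    Role.OPERATOR: {"read_output", "run_agent"},
    Role.ADMIN: {"read_output", "run_agent", "update_model"},
}


def is_allowed(role: Role, action: str, mfa_verified: bool) -> bool:
    """Allow an action only if MFA has passed and the role grants it."""
    if not mfa_verified:
        return False
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed(Role.OPERATOR, "run_agent", mfa_verified=True))     # True
print(is_allowed(Role.OPERATOR, "update_model", mfa_verified=True))  # False
print(is_allowed(Role.ADMIN, "update_model", mfa_verified=False))    # False
```

Denying by default (an unknown role or action is rejected) is the safer design choice here, because a missing entry never grants access by accident.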

3. Testing and Validating Models

Before an AI agent is deployed, it goes through rigorous testing. This includes:

Performance Testing

Bias Detection

Accuracy Verification

The result is a lower chance of the agent producing incorrect or potentially dangerous output.
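Accuracy verification can be as simple as comparing the agent's outputs with known-good answers before release. The Python sketch below shows one possible form of such a check. The function names, the sample data, and the 0.9 threshold are assumptions made for illustration, not values from any standard.

```python
def accuracy(predictions, labels):
    """Return the fraction of predictions that match the expected labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and equal in length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def passes_release_gate(predictions, labels, min_accuracy=0.9):
    """Hypothetical deployment gate: block release below a chosen accuracy."""
    return accuracy(predictions, labels) >= min_accuracy


# Made-up evaluation data: four of the five predictions are correct.
predictions = ["approve", "deny", "approve", "deny", "approve"]
labels = ["approve", "deny", "approve", "approve", "approve"]

print(accuracy(predictions, labels))             # 0.8
print(passes_release_gate(predictions, labels))  # False
```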

4. Ethical AI Development

Responsible AI development involves the following:

Bias Mitigation Techniques

Testing for Fairness

Transparent Decision-Making Methods

Together, these practices help the AI act ethically and avoid discrimination.
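One common way to test for fairness is to compare how often each group receives a favorable outcome, a measure often called the demographic parity gap. The sketch below is a minimal Python version. The group labels and outcome data are invented for the example, and demographic parity is only one of several fairness measures a team might choose.

```python
from collections import defaultdict


def positive_rates(outcomes, groups):
    """Return the share of favorable outcomes (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += int(outcome)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(outcomes, groups):
    """Difference between the highest and lowest group rates (0 means equal)."""
    rates = positive_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


# Made-up decisions: 1 is a favorable outcome, 0 is an unfavorable one.
outcomes = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(positive_rates(outcomes, groups))          # {'a': 0.75, 'b': 0.25}
print(demographic_parity_gap(outcomes, groups))  # 0.5
```

A large gap does not prove discrimination on its own, but it is a useful signal that the model's behavior deserves a closer look.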

5. Continuous Monitoring and Updating

After deployment, AI systems are systematically reviewed and monitored through:

Real-Time Performance Monitoring

Error Detection Mechanisms

Periodic Model Updates

Together, these practices help keep the system secure and up to date against current threats.
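Real-time monitoring often comes down to tracking a metric over a recent window and raising a flag when it crosses a limit. The Python sketch below shows one way this might look. The `ErrorRateMonitor` class, its window size, and its threshold are hypothetical values picked for the example.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the error rate over the most recent agent requests."""

    def __init__(self, window_size=100, max_error_rate=0.05):
        # A bounded deque discards the oldest result automatically.
        self.results = deque(maxlen=window_size)
        self.max_error_rate = max_error_rate

    def record(self, succeeded: bool) -> None:
        self.results.append(succeeded)

    def error_rate(self) -> float:
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def needs_attention(self) -> bool:
        """True when the recent error rate exceeds the allowed maximum."""
        return self.error_rate() > self.max_error_rate


monitor = ErrorRateMonitor(window_size=4, max_error_rate=0.25)
for succeeded in [True, True, True, False]:
    monitor.record(succeeded)
print(monitor.needs_attention())  # False (error rate is exactly 0.25)

monitor.record(False)             # window is now [True, True, False, False]
print(monitor.needs_attention())  # True (error rate is 0.5)
```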

6. Detection & Prevention of Threats

Advanced threat detection technologies can identify a wide range of threats, including:

Cyber Attacks

Data Manipulation

Unauthorized Actions

This helps teams prevent security incidents and respond quickly when they do occur.
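Data manipulation, for instance, can be detected by signing data when it is stored and checking the signature when it is read back. The following Python sketch uses the standard library's `hmac` module for this. The key and payload are placeholders for illustration; in practice the key would come from a secrets manager, never from source code.

```python
import hashlib
import hmac


def sign(payload: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 signature for the payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def is_untampered(payload: bytes, signature: str, key: bytes) -> bool:
    """Check the payload against its signature using a constant-time compare."""
    return hmac.compare_digest(sign(payload, key), signature)


key = b"example-key-for-illustration-only"  # placeholder, not a real secret
original = b'{"user_id": 42, "action": "transfer", "amount": 100}'
signature = sign(original, key)

tampered = b'{"user_id": 42, "action": "transfer", "amount": 9999}'

print(is_untampered(original, signature, key))  # True
print(is_untampered(tampered, signature, key))  # False
```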

7. Compliance and Quality Standards

To comply with the laws and standards of practice that apply to its industry, a company must implement the following:

Data Protection Procedures

AI/ML Usage Policies

Audit Readiness

Meeting these standards promotes trust with clients and users.

8. Secure Infrastructure for AI/ML Agents

When deploying AI/ML agents, companies typically use secure cloud environments, container tools such as Docker and Kubernetes, and network firewalls to reduce their systems' exposure to external threats.

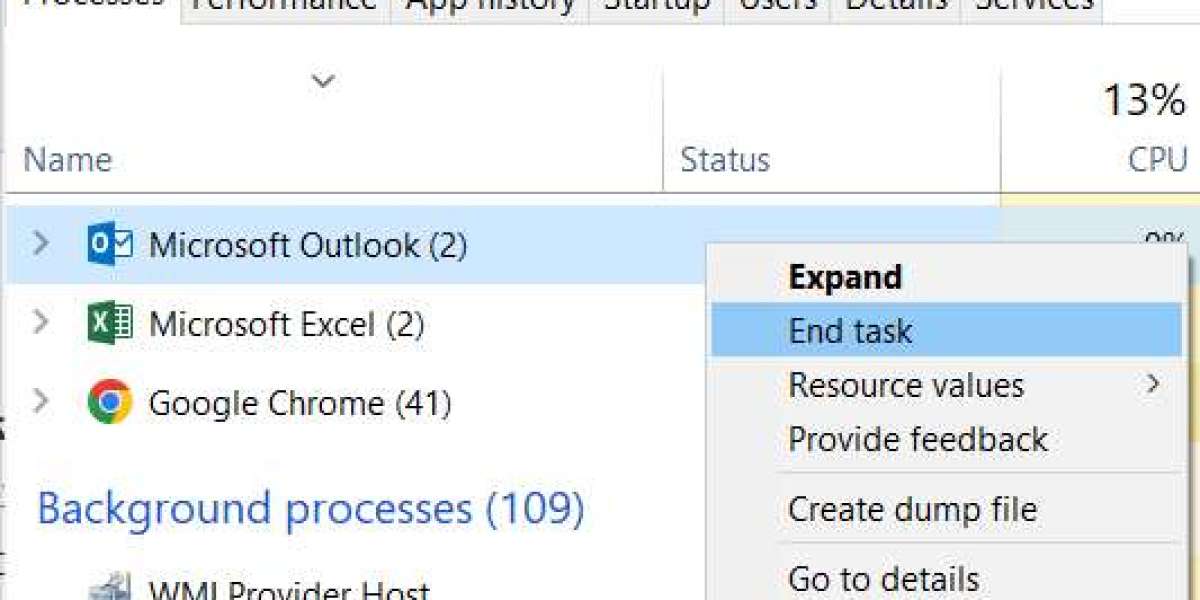

9. Human Oversight and Control of the AI/ML Workflow

Although many new AI/ML systems are highly sophisticated, most still include some form of:

Human-in-the-loop mechanisms

Manual Overrides

These controls allow critical decisions made by AI/ML agents to be reviewed by a human.
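A human-in-the-loop mechanism can be sketched as a routing rule: the agent acts on its own only when a decision is low-risk and high-confidence, and a reviewer can always replace the agent's choice. The Python example below is a simplified illustration. The `Decision` fields, the 0.8 confidence threshold, and the function names are assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    action: str
    confidence: float  # agent's self-reported confidence, from 0.0 to 1.0
    critical: bool     # whether the action has serious consequences


def route(decision: Decision, min_confidence: float = 0.8) -> str:
    """Send critical or low-confidence decisions to a human reviewer."""
    if decision.critical or decision.confidence < min_confidence:
        return "human_review"
    return "auto_execute"


def apply_override(decision: Decision, reviewer_action: Optional[str] = None) -> str:
    """Manual override: the reviewer's action, if given, replaces the agent's."""
    return reviewer_action if reviewer_action is not None else decision.action


refund = Decision(action="issue_refund", confidence=0.95, critical=True)
reply = Decision(action="send_faq_link", confidence=0.92, critical=False)

print(route(refund))                          # human_review
print(route(reply))                           # auto_execute
print(apply_override(refund, "deny_refund"))  # deny_refund
```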

Conclusion

AI agents must be developed with safety as the first priority. Strong security practices and clear ethical guidelines belong in the development process, and continuous monitoring helps ensure that an organization's AI systems remain safe, effective, and trustworthy. Developers need to maintain these practices as their AI solutions grow, so that those solutions continue to inspire confidence and support long-term success.

For more details: https://www.clarisco.com/ai-agent-development-company

WhatsApp: https://shorturl.at/4rRF6